HAProxy Load Balancing

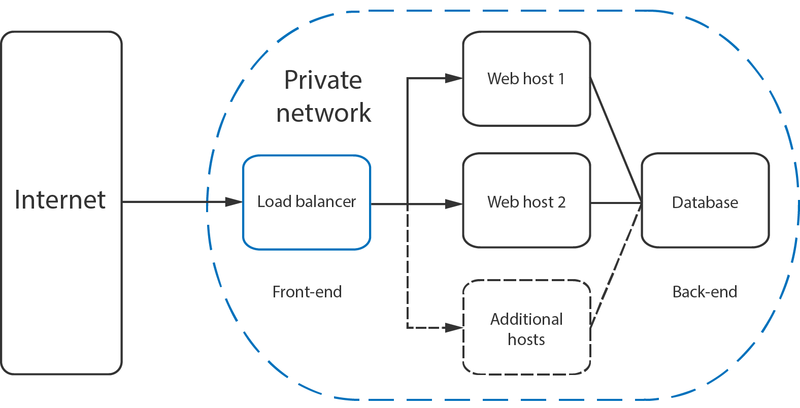

Are you looking for a way to load balance your website across multiple nodes? Here is an example using HAProxy on CentOS 7 cloud nodes, which makes it easy to add web nodes if and when required.

For this example I will be using four cloud servers: one HAProxy server, two web servers (web01 and web02), and a database server.

- Haproxy Server

- Web01

- Web02

- Database Server (not shown in this configuration)

HAProxy 1.7 on CentOS Install

To reduce resource consumption, this node will run nothing besides HAProxy. First install the build dependencies and a few utilities:

yum install gcc pcre-static pcre-devel openssl-devel htop vim openssl mod_ssl -y

Now let's install HAProxy 1.7 from source.

wget https://www.haproxy.org/download/1.7/src/haproxy-1.7.9.tar.gz
tar xzvf haproxy-1.7.9.tar.gz
cd haproxy-1.7.9

The next command builds HAProxy with OpenSSL support. This will be used later for HTTPS connections, and I recommend enabling it now so it's there when needed.

make TARGET=linux2628 USE_PCRE=1 USE_OPENSSL=1 USE_ZLIB=1 USE_CRYPT_H=1 USE_LIBCRYPT=1
make install
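After the build it can be worth confirming that the binary really picked up the options passed to `make`. The sketch below assumes `haproxy -vv` prints an `OPTIONS = ...` line listing the `USE_*` flags, as 1.7 builds do. `has_build_option` is a hypothetical helper name used here for illustration, not something shipped with HAProxy.

```shell
#!/bin/sh
# has_build_option OPTION
# Reads `haproxy -vv` output on stdin and succeeds if the build options
# line lists OPTION (for example USE_OPENSSL=1).
# Hypothetical helper for illustration; not part of HAProxy.
has_build_option() {
    grep -E '^[[:space:]]*OPTIONS[[:space:]]*=' | grep -qwF -- "$1"
}

# Usage on the HAProxy node (commented out so the snippet has no side effects):
#   haproxy -vv | has_build_option USE_OPENSSL=1 && echo "OpenSSL support built in"
```

If the check fails, rebuilding with the missing `USE_*` flag is usually easier than discovering the gap later when HTTPS is switched on.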

Create a few needed directories and files

mkdir -p /etc/haproxy
mkdir -p /var/lib/haproxy
touch /var/lib/haproxy/stats

Create a symbolic link for the binary to allow you to run HAProxy commands as a normal user.

ln -s /usr/local/sbin/haproxy /usr/sbin/haproxy

If you want to add the proxy as a service to the system, copy the haproxy.init file from the examples to your /etc/init.d directory. Change the file permissions to make the script executable and then reload the systemd daemon.

cp ./examples/haproxy.init /etc/init.d/haproxy
chmod 755 /etc/init.d/haproxy

Now we need to reload the daemon, make sure HAProxy starts whenever the server is rebooted, and create the system user HAProxy will run as.

systemctl daemon-reload
chkconfig haproxy on
useradd -r haproxy

That was quick and easy. Now comes the fun part: configuring the server.

Let's configure the firewall to allow HTTP and HTTPS traffic. In this configuration the stats page is served on port 80, so it needs no separate port.

firewall-cmd --permanent --zone=public --add-service=http
firewall-cmd --permanent --zone=public --add-service=https
firewall-cmd --reload
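To confirm the rules took effect, the active service list can be checked after the reload. This is a minimal sketch: `services_include` is a hypothetical helper, and it assumes `firewall-cmd --list-services` prints the services as a space-separated list.

```shell
#!/bin/sh
# services_include "LIST" NAME...
# Succeeds only if every NAME appears as a whole word in the
# space-separated LIST. Hypothetical helper for illustration.
services_include() {
    list=" $1 "
    shift
    for svc in "$@"; do
        case "$list" in
            *" $svc "*) ;;
            *) echo "missing service: $svc" >&2; return 1 ;;
        esac
    done
    return 0
}

# Usage on the HAProxy node (commented out; needs firewalld running):
#   services_include "$(firewall-cmd --zone=public --list-services)" http https \
#       && echo "HTTP and HTTPS are open"
```

Padding the list with spaces keeps `http` from matching inside `https`.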

Setting up HAProxy for load balancing is fairly straightforward. All you need to do is tell HAProxy what kind of connections it should listen for and where those connections should be relayed. This is done by creating a configuration file, /etc/haproxy/haproxy.cfg, that defines these settings. You can read about the configuration options on the HAProxy documentation page if you wish to find out more.

Configuring HAProxy for Load Balancing Layer 7

vi /etc/haproxy/haproxy.cfg

Add the following for a basic configuration, which will get everything up and running without HTTPS (port 443) support. Replace the placeholder addresses on the two server lines with the IP addresses of your web nodes.

global
    log /dev/log local0
    log /dev/log local1 notice
    chroot /var/lib/haproxy
    stats timeout 30s
    user haproxy
    group haproxy
    daemon

defaults
    log global
    mode http
    option httplog
    option forwardfor
    option dontlognull
    timeout connect 5000
    timeout client 50000
    timeout server 50000

frontend http_front
    bind *:80
    stats uri /haproxy?stats
    default_backend http_back

backend http_back
    balance roundrobin
    server web01 255.255.255.1:80 check
    server web02 255.255.255.2:80 check

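This defines a layer 7 (HTTP mode) load balancer with a frontend named http_front listening on port 80, which directs traffic to the default backend named http_back. The backend distributes requests across web01 and web02 in round-robin order, and the check keyword enables health checks on each server.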
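To make the `balance roundrobin` setting concrete, here is a simplified model of the order in which requests reach the two web nodes. It assumes equal weights and that both servers pass their health checks. It is only an illustration, not HAProxy's actual scheduler, and `pick_server` is a hypothetical name.

```shell
#!/bin/sh
# Simplified model of round-robin selection with equal weights.
# Not HAProxy's implementation; for illustration only.

# pick_server REQUEST_NUMBER SERVER...
# Prints the server that request number N (counting from 0) would reach.
pick_server() {
    n=$1
    shift
    skip=$(( n % $# ))      # how many servers to skip in the list
    shift "$skip"
    printf '%s\n' "$1"
}

for i in 0 1 2 3; do
    echo "request $i -> $(pick_server "$i" web01 web02)"
done
```

In this model the requests alternate between web01 and web02. Adding a third server line to the backend would extend the rotation to three nodes.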

The additional stats URI /haproxy?stats enables the statistics page at that specified address.
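The same stats URI can also return CSV when `;csv` is appended, which is handy for scripts. The sketch below is a hedged example: `server_status` is a hypothetical helper, `haproxy.example.com` is a placeholder host name, and the script finds the `status` column by its header name instead of relying on a fixed position.

```shell
#!/bin/sh
# server_status
# Reads HAProxy stats CSV on stdin and prints "proxy/server status" for
# each data row. Hypothetical helper for illustration.
server_status() {
    awk -F, '
        NR == 1 {
            sub(/^# /, "", $1)          # the header line starts with "# "
            for (i = 1; i <= NF; i++) if ($i == "status") col = i
            next
        }
        col && NF >= col { print $1 "/" $2, $col }
    '
}

# Usage (commented out; haproxy.example.com is a placeholder host name):
#   curl -s 'http://haproxy.example.com/haproxy?stats;csv' | server_status
```

A row reporting DOWN for web01 or web02 means the health check enabled by the `check` keyword is failing for that node.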

After making these changes, save the file and restart HAProxy with the following command.

systemctl restart haproxy
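Restarting with a broken configuration takes the load balancer offline, so it can help to run HAProxy's built-in check mode (`-c`) first. This is a minimal sketch, assuming the configuration path used in this article. `restart_if_valid` is a hypothetical wrapper, not a command that ships with HAProxy.

```shell
#!/bin/sh
# restart_if_valid [CONFIG]
# Runs HAProxy's configuration check (-c) and restarts the service only
# when the check passes. Hypothetical helper for illustration.
restart_if_valid() {
    cfg=${1:-/etc/haproxy/haproxy.cfg}
    if haproxy -c -f "$cfg"; then
        systemctl restart haproxy
    else
        echo "configuration check failed; HAProxy was not restarted" >&2
        return 1
    fi
}

# Usage as root on the HAProxy node (commented out to avoid side effects):
#   restart_if_valid /etc/haproxy/haproxy.cfg
```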

HAProxy Troubleshooting

We ran into a few issues at first, caused by typos in the configuration file. With this configuration, everything HAProxy logs ends up in /var/log/messages, so that is the first place to look.
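Since /var/log/messages is shared with every other service on the node, filtering it down to HAProxy's lines makes problems easier to spot. A minimal sketch, assuming each relevant line contains the string `haproxy`. `haproxy_log_lines` is a hypothetical helper name.

```shell
#!/bin/sh
# haproxy_log_lines [LOGFILE]
# Prints the lines of LOGFILE that mention haproxy. The default path is
# the one used in this article. Hypothetical helper for illustration.
haproxy_log_lines() {
    grep 'haproxy' "${1:-/var/log/messages}"
}

# Usage as root on the HAProxy node (commented out; needs the log file):
#   haproxy_log_lines | tail -n 20
```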

High Availability

The load balancing setup described above uses a single load balancer to direct traffic to one of several backend servers. However, that load balancer is itself a single point of failure; if it goes down or gets overwhelmed with requests, it can cause high latency or downtime for your service.

A high availability (HA) setup is an infrastructure without a single point of failure. It prevents a single server failure from being a downtime event by adding redundancy to every layer of your architecture. A load balancer facilitates redundancy for the backend layer (web/app servers), but for a true high availability setup, you need to have redundant load balancers as well.